Once upon a time, our world was simple. There was a thesis — “The Anatomy of a Large-Scale Hypertextual Web Search Engine” by Sergey Brin and Larry Page — that told us how Google worked. And while Google evolved rapidly from the concepts in that document, it still told us what we needed to know to rank highly in search.

As a community, we abused it — and many made large sums of money simply by buying links to their site. How could you expect any other result? Offer people a way to spend $2 and make $10, and guess what? Lots of people are going to sign up for that program.

But our friends at Google knew that providing the best search results would increase their market share and revenue, so they made changes continually to improve search quality and protect against attacks by spammers. A big part of what made this effort successful was obscuring the details of their ranking algorithm.

When reading the PageRank thesis was all you needed to do to learn how to formulate your SEO strategy, the world was simple. But Google has since been issued hundreds of patents, most of which have probably not been implemented and never will be. There may even be trade secret concepts for ranking factors for which patent applications have never been filed.

Yet, as search marketers, we still want to make things very simple. Let’s optimize our site for this one characteristic and we’ll get rich! In today’s world, this is no longer realistic. There is so much money to be had in search that any single factor has been thoroughly tested by many people. If there were one single factor that could be exploited for guaranteed SEO success, you would already have seen someone go public with it.

‘Lots of different signals’ contribute to rankings

Despite the fact that there is no silver bullet for obtaining high rankings, SEO professionals often look for quick fixes and easy solutions when a site’s rankings take a hit. In a recent Webmaster Central Office Hours Hangout, a participant asked Google Webmaster Trends Analyst John Mueller about improving his site content to reverse a drop in traffic that he believed to be the result of the Panda update from May of 2014.

The webmaster told Mueller that he and his team were going through the site category by category to improve the content; he wanted to know whether rankings would improve category by category as well, or whether a blanket score is applied to the whole site.

Here is what Mueller said in response (emphases mine):

“For the most part, we’ve moved more and more towards understanding sections of the site better and understanding what the quality of those sections is. So if you’re … going through your site step by step, then I would expect to see … a gradual change in the way that we view your site. But, I also assume that if … you’ve had a low quality site since 2014, that’s a long time to … maintain a low quality site, and that’s something where I suspect there are **lots of different signals** that are … telling us that this is probably not such a great site.”

(Note: Hat tip to Glenn Gabe for surfacing this.)

I want to draw your attention to the bolded part of the above comment. Doesn’t it make you wonder, what are the “lots of different signals?”

While it’s important not to over-analyze every statement by Googlers, this certainly does sound like the related signals would involve some form of cumulative user engagement metrics. However, if it were as simple as improving user engagement, it likely would not take a long time for someone impacted by a Panda penalty to recover — as soon as users started reacting to the site better, the issue would presumably fix itself quickly.

What about CTR?

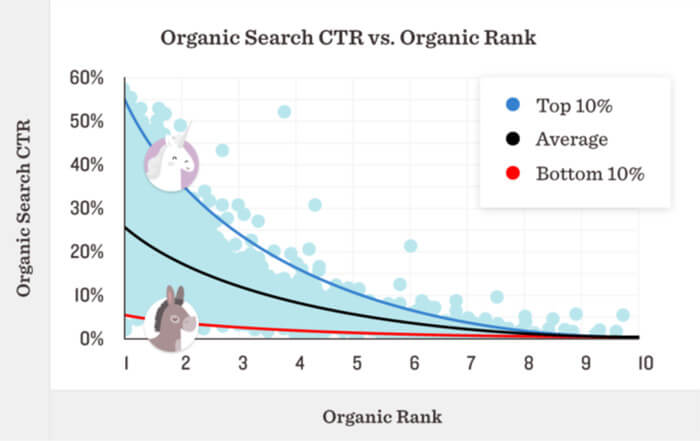

Larry Kim is passionate about the possibility that Google directly uses CTR as an SEO ranking factor. By the way, do read that article. It’s a great read, as it gives you tons of tips on how to improve your CTR — which is very clearly a good thing regardless of SEO ranking impact.

That said, I don’t think Google’s algorithm is as simple as measuring CTR on a search result and moving higher-CTR items higher in the SERPs. For one thing, it would be far too easy a signal to game; industries that are well known for aggressive SEO testing would have pegged it as a ranking factor and made millions of dollars from it by now. For another, a high CTR says nothing about the quality of the page you land on. It speaks to your approach to title and meta description writing, and to your branding.

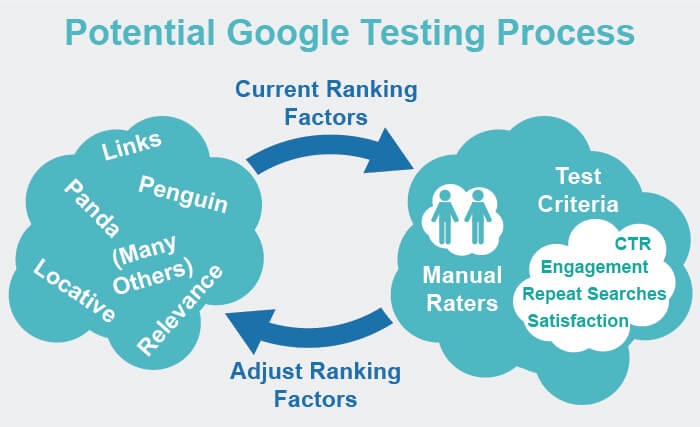

We also have the statements by Paul Haahr, a ranking engineer at Google, on how Google works. He gave the linked presentation at SMX West in March 2016. In it, he discusses how Google does use a variety of user engagement metrics in ranking. The upshot is that, according to Haahr, they are NOT used as a direct ranking factor; instead, they are used in periodic quality control checks of the ranking factors that Google does use.

Here is a summary of what his statements imply:

- CTR, and signals like it, are NOT a direct ranking factor.

- Signals like content quality and links, and algorithms like Panda, Penguin, and probably hundreds of others are what they use instead (the “Core Signal Set”).

- Google runs a number of quality control tests on search quality. These include CTR and other direct measurements of user engagement.

- Based on the results of these tests, Google will adjust the Core Signal Set to improve test results.

The reason for this process is that it lets Google run its quality control tests in a controlled environment where the measurements are not easily gamed, which makes the algorithm itself far harder for black-hat SEOs to manipulate.
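To make that distinction concrete, here is a toy Python sketch of the process as summarized above. It is purely illustrative and not Google's implementation: the `Page` fields, the two "core signals," the candidate weightings, and the position-discounted CTR check are all assumptions made up for this example. The one point it is meant to show is that CTR never feeds the ranking function directly; it is only used to judge which version of the core signal set produces better results.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Page:
    url: str
    content_quality: float  # hypothetical core signal in [0, 1]
    link_score: float       # hypothetical core signal in [0, 1]
    observed_ctr: float     # engagement measurement, used only for evaluation


def rank(pages, weights):
    """Order pages by a weighted sum of core signals only (CTR is not an input)."""
    w_content, w_links = weights
    return sorted(
        pages,
        key=lambda p: w_content * p.content_quality + w_links * p.link_score,
        reverse=True,
    )


def engagement_check(ranking):
    """Quality-control metric: CTR of each result, discounted by its position."""
    return sum(p.observed_ctr / (pos + 1) for pos, p in enumerate(ranking))


def tune(pages, candidate_weights):
    """Pick the core-signal weighting whose ranking scores best on the check."""
    return max(candidate_weights, key=lambda w: engagement_check(rank(pages, w)))


# Made-up data for illustration only.
PAGES = [
    Page("a.example", content_quality=0.9, link_score=0.2, observed_ctr=0.30),
    Page("b.example", content_quality=0.3, link_score=0.9, observed_ctr=0.05),
    Page("c.example", content_quality=0.6, link_score=0.4, observed_ctr=0.15),
]
CANDIDATES = [(0.2, 0.8), (0.5, 0.5), (0.8, 0.2)]

best = tune(PAGES, CANDIDATES)
top = rank(PAGES, best)[0]
print(best, top.url)
```

In this toy setup, a page cannot climb simply by inflating its own CTR, because `rank` never reads `observed_ctr`. Engagement only matters in aggregate, when it nudges the choice of weights.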

So is Larry Kim right? Or Paul Haahr? I don’t know.

Back to John Mueller’s comments for a moment

Looking back on the John Mueller statement I shared above, it strongly implies that there is some cumulative impact over time of generating “lots of different signals that are telling us that this is probably not such a great site.”

In other words, I’m guessing that if your site generates a lot of negative signals for a long time, it’s harder to recover, as you need to generate new positive signals for a sustained period of time to make up for the history that you’ve accumulated. Mueller also makes it seem like a graduated scale of some sort, where turning a site around will be “a long-term project where you’ll probably see gradual changes over time.”

[Read the full article on Search Engine Land.]

