Math is hard, even for Facebook. And it’s getting harder to trust Facebook’s math, even as the social network fine-tunes its calculations.

For the third time since September, Facebook is disclosing new measurement errors. The two new errors affected the reaction counts Facebook reports on Pages’ Live videos, as well as the engagement figures Facebook reports for off-Facebook links; the latter link engagement metrics were recently used in investigations by BuzzFeed and The New York Times into fake news articles’ performance on Facebook.

In addition to acknowledging the two new errors — of which one has been corrected and one is still being inspected — Facebook has refined a measurement marketers may reference when buying ads through the social network.

None of the aforementioned metrics had any impact on how much money Facebook charges advertisers for their campaigns. But they may have informed brands’ Facebook ad-buying strategies as well as brands’, publishers’ and others’ Facebook-related content-publishing strategies.

Facebook should be given credit for being upfront about its mistakes and rectifying its errors. But the more measurement errors and corrections it discloses, the more difficult it becomes to trust Facebook’s measurements. It’s a dilemma that some brands and agencies have been wrestling with following the previous two measurement error disclosures. And it’s one that may not subside until Facebook allows independent firms to directly measure these previously faulty stats, rather than relying on Facebook for the raw, corrected data.

Okay, now for the details of what’s up with Facebook’s math this time.

Inconsistent engagement stats for off-Facebook links

Facebook’s Graph API and mobile app report mismatched engagement rates for outside links.

Facebook has confirmed a discrepancy exists between two measures of the Facebook engagement rates for outside links, but the company has not been able to identify the reason for the measurement anomaly.

I brought the error to Facebook’s attention a few weeks ago after I came across it while analyzing BuzzFeed’s recent investigation of fake news links’ performance on Facebook. (At the bottom of this post, I’ll get into how I found the error and how these stats can be accessed for any URL by anyone with a Facebook account.)

The two metrics in question are: 1) the total number of shares, likes and comments a link has received on and off Facebook, reported through Facebook’s Graph API; and 2) the number of people who have shared or commented on that link, reported through search results in Facebook’s mobile apps.

The problem with those metrics: For some links — including ones examined by BuzzFeed — Facebook reported that the number of people who shared or commented on the link exceeded the sum of shares, likes and comments for that link. That shouldn’t happen, since the shares and comments stat reported in the mobile search results is a subset of the shares, likes and comments stat reported by the Graph API, a Facebook spokesperson confirmed. It’s unclear whether the inconsistent stats mean that Facebook’s Graph API is overcounting engagements or that its mobile search results are undercounting them.

Once Facebook determines why the measurement discrepancy exists, the company will notify people that its cause has been identified and the error has been corrected.

Misallocated reactions on Live videos

Facebook incorrectly reported the source of reactions on Pages’ Live videos.

Facebook has been incorrectly reporting the number of times people “reacted” to a Live video by clicking the like button or one of the corresponding “reactions” emojis while watching the live stream. To be clear, the total number of reactions, which appears publicly below a Live video post, has been accurate, but the breakdown of where those reactions occurred, which is shown only to Pages, has not been.

Within Facebook’s Page Insights analytics tools, Pages can see the reactions a Live video generated, broken down into two groups. “Reactions on post” covers reactions that happened while people were watching the live stream. “Reactions on shares” covers reactions received on other people’s posts that included the Live video, such as when someone shares (the Facebook equivalent of retweeting) a Page’s Live video post with their friends and those friends click the like/reaction button on that shared post after the live stream has ended.

The “reactions on post” number was supposed to measure only one reaction per individual live viewer, and it did. But people may react multiple times to a live video, such as by clicking “haha” each time the stream makes them laugh. Facebook incorrectly added those extra reactions to the “reactions on shares” figure, even though they weren’t reactions on shared posts.

In addition to correcting the reactions misallocation, Facebook is changing the “reactions on post” metric so that it no longer counts only one reaction per viewer. Instead it will also count those extra reactions that had been incorrectly counted as “reactions on shares.” Facebook will no longer offer a measurement of viewers who reacted to a live stream at least once, according to a Facebook spokesperson. As a result, any Pages that had been tracking the “reactions on post” metric for their past live streams might as well do away with that metric’s historical data. The comparison will no longer be apples to apples, but instead apples to watermelons.

The revised Live reaction breakdowns will be applied to new Live videos beginning in mid-December. They are expected to result in a 500 percent average increase in the number of “reactions on post” and a 25 percent average decrease in the number of “reactions on shares,” according to Facebook.
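To make the reallocation concrete, here is a minimal sketch of the old and new breakdowns as described above. It is not Facebook's code; the function names and the toy numbers (10 live viewers reacting six times each, plus 150 genuine reactions on shared posts) are hypothetical, chosen only so the result lines up with the average changes Facebook cited.

```python
def old_breakdown(live_reactions, share_reactions):
    """Old reporting: one reaction per live viewer counted as 'on post';
    repeat live reactions were wrongly added to 'on shares'."""
    unique_viewers = len(set(live_reactions))
    extra_live = len(live_reactions) - unique_viewers
    return {
        "reactions_on_post": unique_viewers,
        "reactions_on_shares": share_reactions + extra_live,
    }


def new_breakdown(live_reactions, share_reactions):
    """Revised reporting: every live reaction counts as 'on post'."""
    return {
        "reactions_on_post": len(live_reactions),
        "reactions_on_shares": share_reactions,
    }


def pct_change(old, new):
    return (new - old) / old * 100


# Hypothetical data: one list entry per live reaction, labeled by viewer.
live = [f"viewer{i}" for i in range(10) for _ in range(6)]
shares = 150  # hypothetical count of genuine reactions on shared posts

old = old_breakdown(live, shares)
new = new_breakdown(live, shares)
print(old)  # {'reactions_on_post': 10, 'reactions_on_shares': 200}
print(new)  # {'reactions_on_post': 60, 'reactions_on_shares': 150}
print(pct_change(old["reactions_on_post"], new["reactions_on_post"]))      # 500.0
print(pct_change(old["reactions_on_shares"], new["reactions_on_shares"]))  # -25.0
```

Note that the public total is 210 reactions under both breakdowns; only the split between the two buckets changes.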

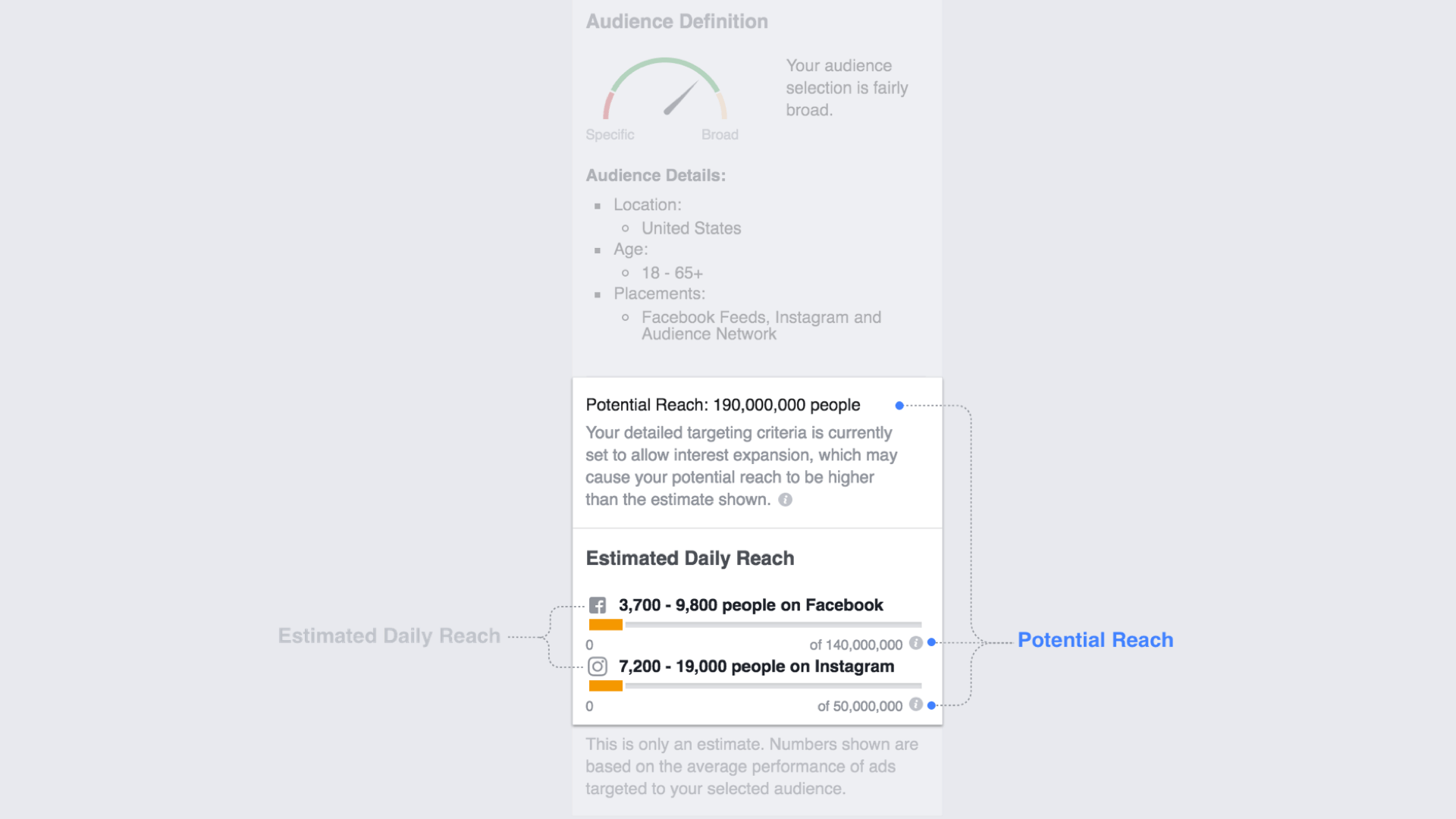

Ads’ estimated reach becoming less of a guesstimate

Facebook is making its estimates of ads’ potential reach more precise.

Facebook is honing how it estimates the number of people an advertiser’s campaign will reach.

Before I explain how these metrics are changing, let me be clear about what the metrics are and are not. The estimated total reach and estimated daily reach figures are hypotheses for how many people may be exposed to an advertiser’s campaign. They are not how many people were, in fact, exposed to the campaign. These estimates, presented when a brand is in the process of creating a campaign using Facebook’s ad creation tool and before the ad buy has been ordered, are supposed to help an advertiser judge how defined its target audience should be and how that targeting affects the number of people who may see its ad.

These estimates will remain an estimate, but Facebook is making them less of a guesstimate.

Previously, Facebook took an advertiser’s defined target audience, looked at the behavior for a smaller sample of people in that audience — like how likely they were to check Facebook, Instagram or a site or app in Facebook’s Audience Network ad network on a given day — and projected that behavior for everyone in that audience. The process bore similarities to an election poll that takes a representative sample of expected voters to forecast how the rest of the country may vote. To make its estimates more precise, Facebook will rely less on these projections. Instead it will rely on what it knows about the behavior for the full target audience and use that more complete view to gauge a campaign’s potential reach.
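The difference between the two approaches can be sketched in a few lines. Facebook has not published its method, so everything here is an assumption made for illustration: each person in the target audience is reduced to a single hypothetical probability of showing up on a given day, and the "sample" is simply every n-th person rather than a properly randomized draw.

```python
from statistics import mean


def projected_reach(daily_visit_probs, sample_step):
    """Older-style estimate: measure a sample, then scale it up to everyone."""
    sample = daily_visit_probs[::sample_step]  # simplistic stand-in for sampling
    return mean(sample) * len(daily_visit_probs)


def full_audience_reach(daily_visit_probs):
    """Newer-style estimate: use what is known about every person in the audience."""
    return sum(daily_visit_probs)


# Hypothetical audience of eight people and their chances of visiting on a given day.
audience = [0.9, 0.5, 0.1, 0.7, 0.3, 0.8, 0.2, 0.6]

print(projected_reach(audience, sample_step=4))
print(full_audience_reach(audience))
```

With an audience this tiny, the sampled projection lands well away from the full-audience figure; with a large, representative sample the two would sit much closer together, which fits Facebook's statement that estimates should move by less than 10 percent.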

As a result of the methodology change, Facebook’s audience estimates may fluctuate by less than 10 percent from the prognostications it had previously provided advertisers, according to the company.

The backstory on Facebook’s link engagements discrepancy

As I mentioned above, I came across the error between Facebook’s two link engagement metrics when I wanted to take a closer look at BuzzFeed’s fake news investigation.

BuzzFeed evaluated the fake news articles’ performance based on the aggregate number of shares, likes and comments the links to those articles received on Facebook. BuzzFeed had looked at all of the engagement categories combined, and I wanted to see if there was a way to zero in on the articles’ share counts. I figured checking the share counts specifically could signal how the news feed algorithm change Facebook announced in June — which prioritized sharing for organic Page post distribution — may have impacted the spread of fake news articles on Facebook.

BuzzFeed used a third-party analytics tool, BuzzSumo, to access and collect these stats, but the figures are actually open to anyone with a Facebook account. All you have to do is paste a link URL into Facebook’s Graph API Explorer tool, click submit, and Facebook will spit out the total number of shares, likes and comments for that link, including the number of people pasting the link into a status update posted to Facebook. (Confusingly, the resulting sum is categorized as “share_count,” but Facebook’s Graph API documentation defines the stat as the total number of shares, likes and comments for that link.)

But the Graph API would only give me updated versions of the figures BuzzFeed already cited, and I wanted to isolate the share count to see what percentage of those total engagements may have carried more weight with Facebook’s algorithm. Luckily, an article published by The New York Times, coincidentally also about fake news, showed me I could access that stat, or what seemed like a close proxy. The New York Times article showed that you can paste a link URL into the search bar in Facebook’s mobile app, and Facebook will show you the number of people who have shared that link. A Facebook spokesperson later clarified that this stat is a measure of how many people shared the link on Facebook or commented on posts that included the link.

For 20 fake news links and 20 real news links from BuzzFeed’s investigation (the August to Election Day groups), I pulled the total number of engagements from Facebook’s Graph API and the total number of people who shared the link from Facebook’s mobile search results. And that’s when I saw something weird. For three of those 40 links (to NBC News, Breitbart and Liberty News), Facebook said that more people had shared or commented on them than had shared, liked or commented on them. And the discrepancies weren’t small. At the time I checked, the NBC News link had received 407,708 shares and comments, per the mobile search results, but 237,881 shares, comments and likes, per the Graph API results.
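The check itself is simple arithmetic: a subset should never be larger than the total it belongs to. Below is a minimal sketch of that sanity check applied to the NBC News figures quoted above. The function and field names are hypothetical, and the two counts are typed in by hand rather than fetched from Facebook.

```python
def check_link_counts(graph_api_total, mobile_shares_comments):
    """Flag a link whose shares+comments count exceeds its shares+likes+comments total."""
    gap = mobile_shares_comments - graph_api_total
    inconsistent = gap > 0
    return {
        "consistent": not inconsistent,
        "gap": gap if inconsistent else 0,
        # How far the supposed subset overshoots the total, in percent.
        "excess_pct": round(100 * gap / graph_api_total, 1) if inconsistent else 0.0,
    }


nbc = check_link_counts(graph_api_total=237_881, mobile_shares_comments=407_708)
print(nbc)  # {'consistent': False, 'gap': 169827, 'excess_pct': 71.4}
```

In other words, the mobile search figure for that link overshot the Graph API total by 169,827, or roughly 71 percent of that total.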

Since Facebook is still figuring out why there’s a deviation between the link engagement counts accessed through the Graph API and the ones accessed through mobile search, it’s hard to say which of the two stats is flawed, if not both.